Decisions such as how much to save and how fast to withdraw assets are often based on broad-brush and unreliable averages for life expectancy.

Alex Tanzi

Bloomberg.com

Originally posted 1 DEC 24

For centuries, humans have used actuarial tables to figure out how long they're likely to live. Now artificial intelligence is taking up the task - and its answers may well be of interest to economists and money managers.

The recently released Death Clock, an AI-powered longevity app, has proved a hit with paying customers - downloaded some 125,000 times since its launch in July, according to market intelligence firm Sensor Tower.

The AI was trained on a dataset of more than 1,200 life expectancy studies with some 53 million participants. It uses information about diet, exercise, stress levels and sleep to predict a likely date of death. The results are a "pretty significant" improvement on the standard life-table expectations, says its developer, Brent Franson.

Despite its somewhat morbid tone - it displays a "fond farewell" death-day card featuring the Grim Reaper - Death Clock is catching on among people trying to live more healthily. It ranks high in the Health and Fitness category of apps. But the technology potentially has a wider range of uses.

Here are some thoughts:

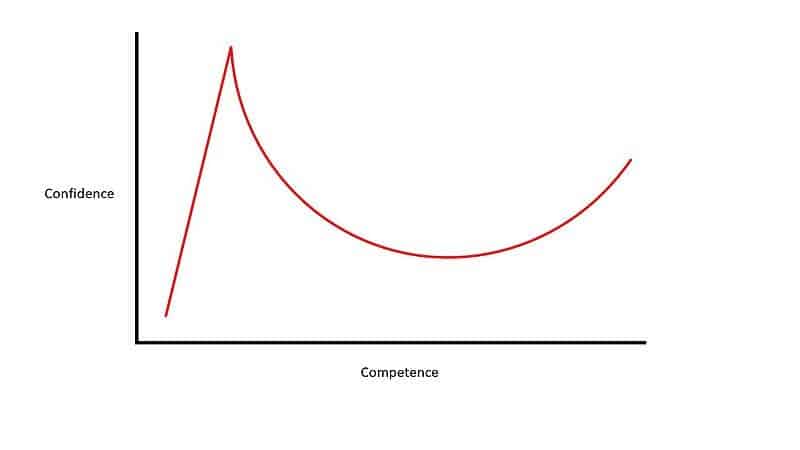

The "Death Clock" app raises numerous moral, ethical, and psychological considerations that warrant careful evaluation. From a psychological perspective, the app has the potential to promote health awareness by encouraging users to adopt healthier lifestyles and providing personalized insights into their life expectancy. This tailored approach can motivate individuals to make meaningful life changes, such as prioritizing relationships or pursuing long-delayed goals. However, the constant awareness of a "countdown to death" could heighten anxiety, depression, or obsessive tendencies, particularly among users predisposed to these conditions. Additionally, over-reliance on the app's predictions might lead to misguided life decisions if users view the estimates as absolute truths, potentially undermining their overall well-being. Privacy concerns also emerge, as sensitive health data shared with the app could be misused or exploited.

From an ethical standpoint, the app empowers individuals by providing access to advanced predictive tools that were previously available only through professional services. It could aid in financial and medical planning, helping users better allocate resources for retirement or healthcare. Nonetheless, there are ethical concerns about the app's marketing, which may exploit individuals' fear of death for profit. The annual subscription fee of $40 could further exacerbate health and longevity inequities by excluding lower-income users. Moreover, the handling and storage of health-related data pose significant risks, as misuse could lead to discrimination, such as insurance companies denying coverage based on longevity predictions.

Morally, the app offers opportunities for reflection and informed decision-making, allowing users to better appreciate the finite nature of life and prioritize meaningful actions. However, it also risks dehumanizing the deeply personal and subjective experience of mortality by reducing it to a numerical estimate. This reductionist view may encourage fatalism, discouraging users from striving for improvement or maintaining hope. Inaccurate predictions could lead to unnecessary financial or emotional strain, further complicating the moral implications of such a tool.

P.S. Death Clock indicates that my date of death is October 3, 2050 (if we still have a viable planet and AI has not deemed me obsolete).