Laura Strickler & Stephanie Gosk

NBCnews.com

Originally posted July 23, 2023

Here is an excerpt:

The man who led the unit at the time, Dr. Brian Hyatt, was one of the most prominent psychiatrists in Arkansas and the chairman of the board that disciplines physicians. But he’s now under investigation by state and federal authorities who are probing allegations ranging from Medicaid fraud to false imprisonment.

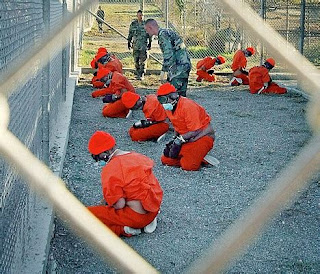

VanWhy’s release marked the second time in two months that a patient was released from Hyatt’s unit only after a sheriff’s deputy showed up with a court order, according to court records.

“I think that they were running a scheme to hold people as long as possible, to bill their insurance as long as possible before kicking them out the door, and then filling the bed with someone else,” said Aaron Cash, a lawyer who represents VanWhy.

VanWhy and at least 25 other former patients have sued Hyatt, alleging that they were held against their will in his unit for days and sometimes weeks. And Arkansas Attorney General Tim Griffin’s office has accused Hyatt of running an insurance scam, claiming to treat patients he rarely saw and then billing Medicaid at “the highest severity code on every patient,” according to a search warrant affidavit.

As the lawsuits piled up, Hyatt remained chairman of the Arkansas State Medical Board. But he resigned from the board in late May after Drug Enforcement Administration agents executed a search warrant at his private practice.

“I am not resigning because of any wrongdoing on my part but so that the Board may continue its important work without delay or distraction,” he wrote in a letter. “I will continue to defend myself in the proper forum against the false allegations being made against me.”

Northwest Medical Center in Springdale “abruptly terminated” Hyatt’s contract in May 2022, according to the attorney general’s search warrant affidavit.

In April, the hospital agreed to pay $1.1 million in a settlement with the Arkansas Attorney General’s Office. Northwest Medical Center could not provide sufficient documentation that justified the hospitalization of 246 patients who were held in Hyatt’s unit, according to the attorney general’s office.

As part of the settlement, the hospital denied any wrongdoing.