Rathje, S., Roozenbeek, J., Van Bavel, J.J. et al.

Nat Hum Behav 7, 892–903 (2023).

Abstract

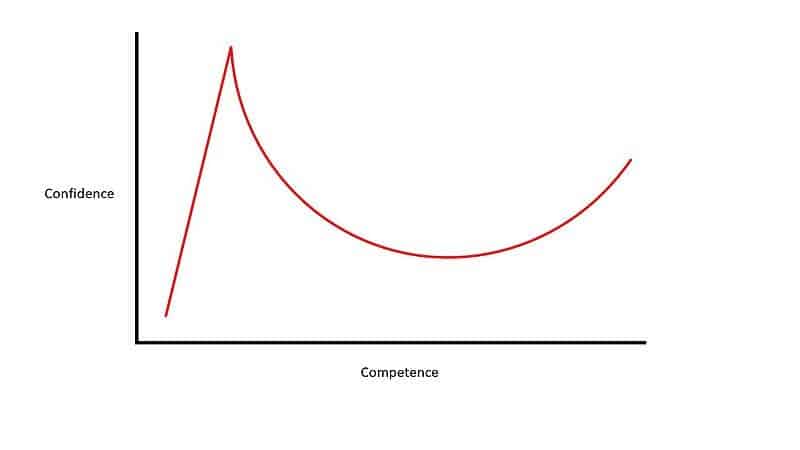

The extent to which belief in (mis)information reflects lack of knowledge versus a lack of motivation to be accurate is unclear. Here, across four experiments (n = 3,364), we motivated US participants to be accurate by providing financial incentives for correct responses about the veracity of true and false political news headlines. Financial incentives improved accuracy and reduced partisan bias in judgements of headlines by about 30%, primarily by increasing the perceived accuracy of true news from the opposing party (d = 0.47). Incentivizing people to identify news that would be liked by their political allies, however, decreased accuracy. Replicating prior work, conservatives were less accurate at discerning true from false headlines than liberals, yet incentives closed the gap in accuracy between conservatives and liberals by 52%. A non-financial accuracy motivation intervention was also effective, suggesting that motivation-based interventions are scalable. Altogether, these results suggest that a substantial portion of people’s judgements of the accuracy of news reflects motivational factors.

Conclusions

There is a sizeable partisan divide in the kind of news liberals and conservatives believe in, and conservatives tend to believe in and share more false news than liberals. Our research suggests these differences are not immutable. Motivating people to be accurate improves accuracy about the veracity of true (but not false) news headlines, reduces partisan bias and closes a substantial portion of the gap in accuracy between liberals and conservatives. Theoretically, these results identify accuracy and social motivations as key factors in driving news belief and sharing. Practically, these results suggest that shifting motivations may be a useful strategy for creating a shared reality across the political spectrum.

Key findings

- Accuracy motivations: Participants who were motivated to be accurate judged the veracity of headlines more accurately; the improvement came from true (but not false) headlines.

- Social motivations: Participants who were motivated to identify news that would be liked by their political allies were less likely to correctly identify true and false news headlines.

- Combination of motivations: Participants who were motivated by both accuracy and social motivations were more likely to correctly identify true news headlines from the opposing political party.
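
The percentage figures reported above (for example, incentives closing the liberal-conservative accuracy gap by 52%) are relative reductions in a difference score. The sketch below shows one way such a figure could be computed. It assumes truth discernment is scored as the mean accuracy rating of true headlines minus that of false headlines; the function names and all numbers are hypothetical illustrations, not the authors' analysis code or data.

```python
from statistics import mean


def truth_discernment(true_ratings, false_ratings):
    """Mean accuracy rating given to true headlines minus that given to false ones.

    Assumed scoring rule for illustration; higher values mean better discernment.
    """
    return mean(true_ratings) - mean(false_ratings)


def percent_reduction(control_gap, treatment_gap):
    """Percentage of a control-condition gap that is absent in the treatment condition."""
    if control_gap == 0:
        raise ValueError("control_gap must be non-zero")
    return 100.0 * (control_gap - treatment_gap) / control_gap


# Made-up gaps between two groups' discernment scores (not values from the study).
control_gap = 2.0    # gap without incentives
incentive_gap = 1.0  # gap with incentives

print(percent_reduction(control_gap, incentive_gap))  # share of the gap closed, in percent
```

The same `percent_reduction` arithmetic applies to a partisan-bias score if that score is defined as a difference between ratings of politically congruent and incongruent headlines, which is again an assumption here.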