Eli Hager

Originally posted 18 March 2024

Diane Baird had spent four decades evaluating the relationships of poor families with their children. But last May, in a downtown Denver conference room, with lawyers surrounding her and a court reporter transcribing, she was the one under the microscope.

Baird, a social worker and professional expert witness, has routinely advocated in juvenile court cases across Colorado that foster children be adopted by or remain in the custody of their foster parents rather than being reunified with their typically lower-income birth parents or other family members.

In the conference room, Baird was questioned for nine hours by a lawyer representing a birth family in a case out of rural Huerfano County, according to a recently released transcript of the deposition obtained by ProPublica.

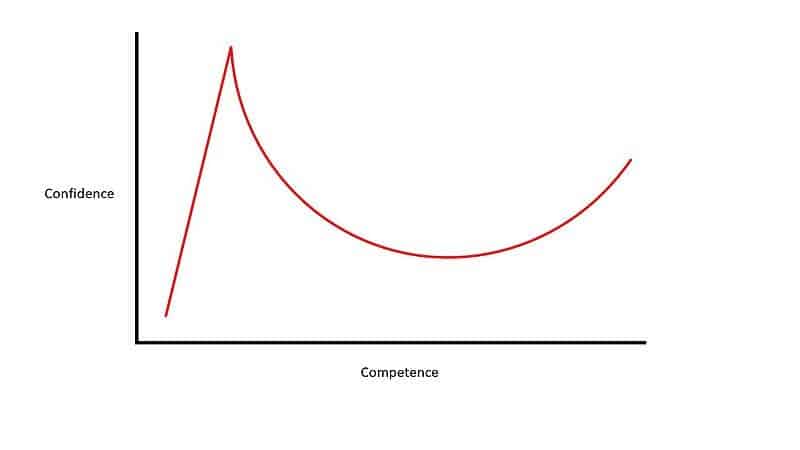

Was Baird’s method for evaluating these foster and birth families empirically tested? No, Baird answered: Her method is unpublished and unstandardized, and has remained “pretty much unchanged” since the 1980s. It doesn’t have those “standard validity and reliability things,” she admitted. “It’s not a scientific instrument.”

Who hired and was paying her in the case that she was being deposed about? The foster parents, she answered. They wanted to adopt, she said, and had heard about her from other foster parents.

Had she considered or was she even aware of the cultural background of the birth family and child whom she was recommending permanently separating? (The case involved a baby girl of multiracial heritage.) Baird answered that babies have “never possessed” a cultural identity, and therefore are “not losing anything,” at their age, by being adopted. Although when such children grow up, she acknowledged, they might say to their now-adoptive parents, “Oh, I didn’t know we were related to the, you know, Pima tribe in northern California, or whatever the circumstances are.”

The Pima tribe is located in the Phoenix metropolitan area.

Here is my summary:

The article reports that Diane Baird, a social worker and expert witness who has testified in foster care cases across Colorado, admitted under oath that her evaluations are unscientific. Baird, who has spent four decades evaluating the relationships of poor families with their children, labeled her method for assessing families the "Kempe Protocol." In a deposition, she acknowledged that the method is unpublished, unstandardized and has never been empirically tested, and that in the case at issue she was hired and paid by the foster parents seeking to adopt. Her admissions raise concerns about the validity of her evaluations in foster care cases and point to the need for more rigorous, scientific approaches to such critical assessments.